Members

Yukihito Yomogida, M.D., Ph.D.

Manager

Yukihito received his Ph.D. from Tohoku University in 2010. After working as a JSPS Research Fellow, Research Fellow, and Research Associate Professor at Tamagawa University, and as a Section Chief at the National Center of Neurology and Psychiatry, he joined Araya in 2021. His research focuses on neuroimaging techniques such as functional magnetic resonance imaging (fMRI).

Kotaro Nomura

Chief Researcher

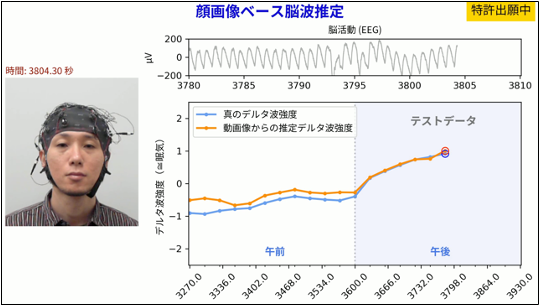

Nomura is a Chief Researcher at Araya. In his previous job, he studied pain using EEG. His research interests include neurotechnology using EEG and deep learning.

Chiaki Michibayashi

Junior Business Development Manager

Chiaki is a Junior Business Development Manager at Araya. After graduating from Hokkaido University in 2011, she received her M.Sci. from Kyoto University in 2013.

She then worked for 9 years as a researcher and business developer in dermatology and oral health care at a consumables manufacturer. She joined Araya in 2022, where she now focuses on business development in neuroscience.

Sensho Nobe

Chief Researcher

Sensho received his Master’s degree in Neurobiology from the University of Tokyo in 2021. After a year as a Ph.D. student there and an intern at Araya, he officially joined Araya as a Researcher & Product Development Staff in 2022. His main research interests are (1) developing neurotech products, such as BCIs, using non-invasive neural recordings and machine learning, and (2) exploring the sources of human intelligence and consciousness using differential neural models.

Anna Maria Hadjiev

Senior Researcher

Anna is a Senior Researcher at Araya. She received her B.S. in Computer Science from the University of Wisconsin–Madison in 2019 and her M.S. in Information Science & Technology from Hokkaido University in 2022. Her research interests are natural language processing and artificial consciousness.

Yawara Haga, Ph.D.

Senior Researcher

Yawara studied primate brain science using MRI at RIKEN CBS and received his Ph.D. (Radiology) from Tokyo Metropolitan University in 2022. After working as a postdoctoral fellow at RIKEN CBS, he joined Araya in 2023. He works on the analysis of brain imaging data.

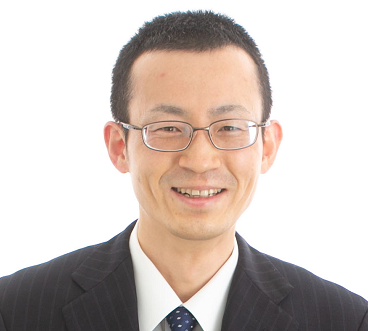

Daisuke Matsuyoshi, M.D., Ph.D.

Technical Advisor

Daisuke earned his Ph.D. from Kyoto University and began his postdoctoral career at the National Institute for Physiological Sciences. After working as an assistant professor at Osaka University, The University of Tokyo, and then Waseda University, he has worked since 2019 as a research scientist at the National Institutes for Quantum and Radiological Science and Technology (formerly the National Institute of Radiological Sciences). His research interests include visual cognition and its plasticity, studied with a combination of behavioral and multimodal neuroimaging techniques (fMRI, sMRI, dMRI, qMRI, MRS, and EEG/MEG). He joined Araya in 2015.

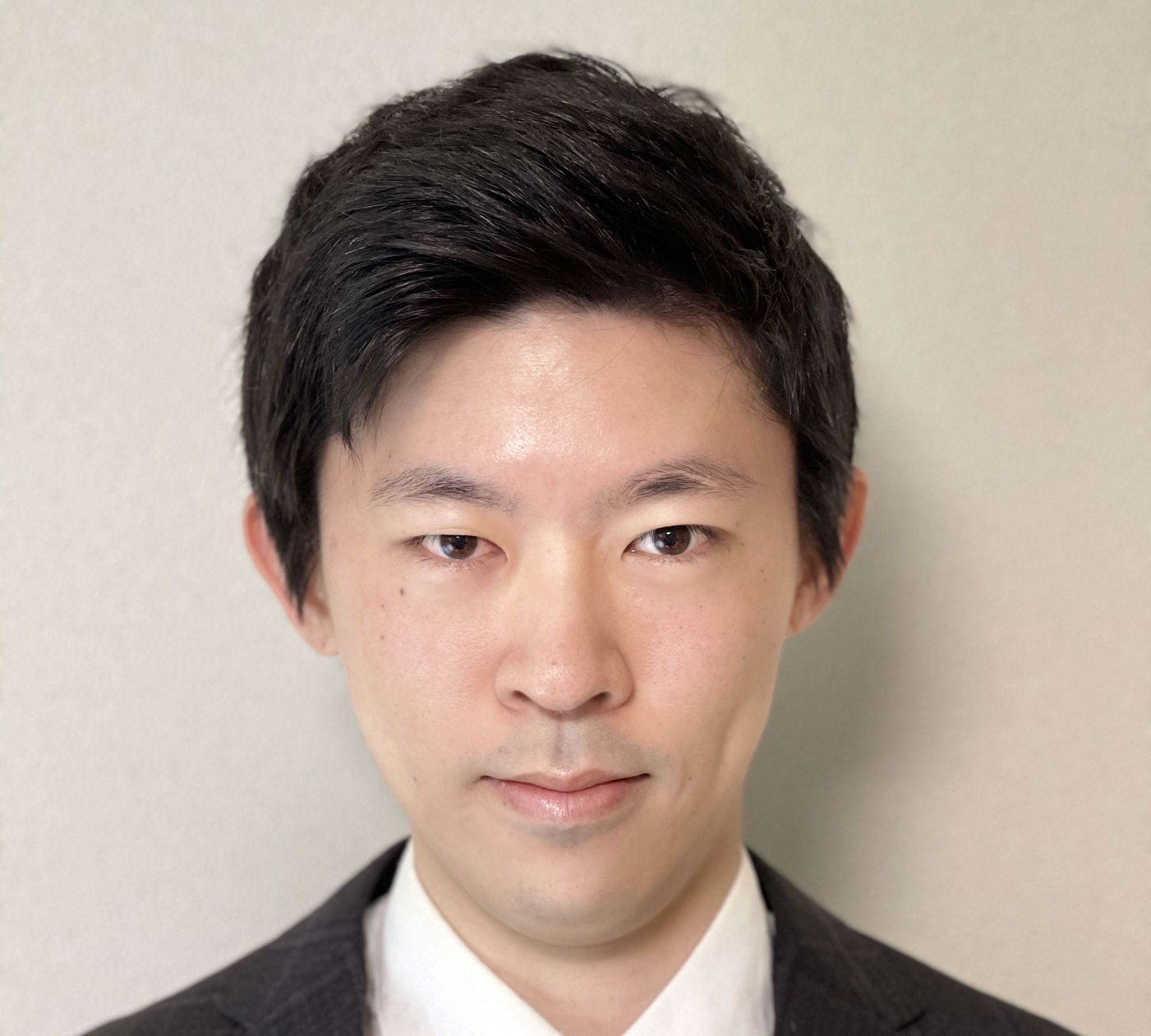

Keita Matsumoto

Leader

Keita is the team leader of Araya's Construction and NeuroAI departments. He received his Master's degree in Information Science and Engineering from Waseda University, where he conducted research on 3D shape reconstruction of objects from images. He then worked in software development and R&D at Mitsubishi Electric Corporation, a general electronics manufacturer, before joining Araya in 2019. Since joining the company, he has worked on research and development in reinforcement learning and imitation learning, predictive coding models, automation of construction machinery, and the development of open-source software (OptiNiSt).

Hiro Hamada, Ph.D.

Leader

Hiro is a Research Team Lead at Araya. He received his Ph.D. in systems neuroscience from the Okinawa Institute of Science and Technology (OIST) in 2019. His research interests are cognitive neuroscience, computational neuroscience, and the phenomenology of consciousness.

Yi-Chen Lin, Ph.D.

Senior Researcher

Yi-Chen is a Senior Researcher at Araya. She received her Ph.D. from National Yang-Ming University in 2019. Her research interests are cognitive neuroscience and neuroimaging.